Silicon Valley just got another wake-up call from Hangzhou. While American tech giants are on track to spend a projected $650 billion this year on massive data centers, DeepSeek has released its V4 series. It's a pointed reminder that throwing money at a problem isn't the only way to win the AI race. If you thought last year’s R1 launch was a fluke, you haven’t been paying attention.

The new V4 Pro and V4 Flash models aren't just incremental updates. They represent a real shift in how we think about efficiency. DeepSeek-V4-Pro is a 1.6-trillion-parameter beast that’s holding its own against the likes of Gemini 3.1, yet it was built on a fraction of the budget OpenAI or Google commands. It’s lean, it’s fast, and frankly, it’s making the "bigger is better" crowd look a bit silly.

What DeepSeek V4 Actually Brings to the Table

The standout feature here is the context window. Both V4 Pro and Flash support 1 million tokens. That’s not a typo. You can feed this thing a dozen textbooks or a massive codebase, and it won't break a sweat. They’ve achieved this through something they call Hybrid Attention Architecture.

In simple terms, many AI models start "forgetting" the beginning of a conversation as it gets longer. They get confused or lose the thread. DeepSeek’s new setup lets the model keep a much tighter grip on long-horizon tasks. If you're a developer trying to debug a complex system or a researcher analyzing thousands of pages of data, this is the long-context capability you've been waiting for.
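
Before pasting a whole repository into a prompt, it helps to check whether it plausibly fits. The sketch below is a back-of-the-envelope estimate, not DeepSeek's tokenizer: the four-characters-per-token ratio and the 50,000-token output reserve are assumptions, and the function names are made up for illustration. Only the 1-million-token window comes from the figures above.

```python
import math

CHARS_PER_TOKEN = 4.0        # assumption: rough average for English text and code
CONTEXT_WINDOW = 1_000_000   # the 1-million-token window cited in this article


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token count from character length; a real tokenizer will differ."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(
    texts: list[str],
    reserve_for_output: int = 50_000,  # assumption: room left for the reply
    window: int = CONTEXT_WINDOW,
) -> bool:
    """True if all texts plus the output reserve fit in the window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= window
```

Under this heuristic, a source tree of about 2 million characters comes to roughly 500,000 tokens, so it would fit with room to spare. Treat the result as a sanity check and measure with the actual tokenizer before relying on it.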

The Power of Flash

Don't sleep on the V4 Flash version either. At 284 billion parameters, it’s designed for speed. In the world of agentic workflows (where AI actually does things rather than just talking), latency is the enemy. Flash is built to be the "brain" for these autonomous agents. It's fast enough to handle real-time decision-making without the agonizing pauses we’ve grown used to with older flagship models.

Why the $650 Billion Bet is Shaking

Last year, when DeepSeek R1 arrived, it caused genuine panic in the stock market. Investors started asking why companies like Nvidia were worth trillions if a "small" Chinese startup could deliver competitive reasoning on a budget. Today, that tension is back.

DeepSeek isn't just playing the game; they're changing the rules. By releasing their model weights under an MIT license, they’ve become the darling of the open-source community. Thousands of companies are now deploying DeepSeek through platforms like Amazon Bedrock because it’s cheaper and often better at coding than the "premium" alternatives.

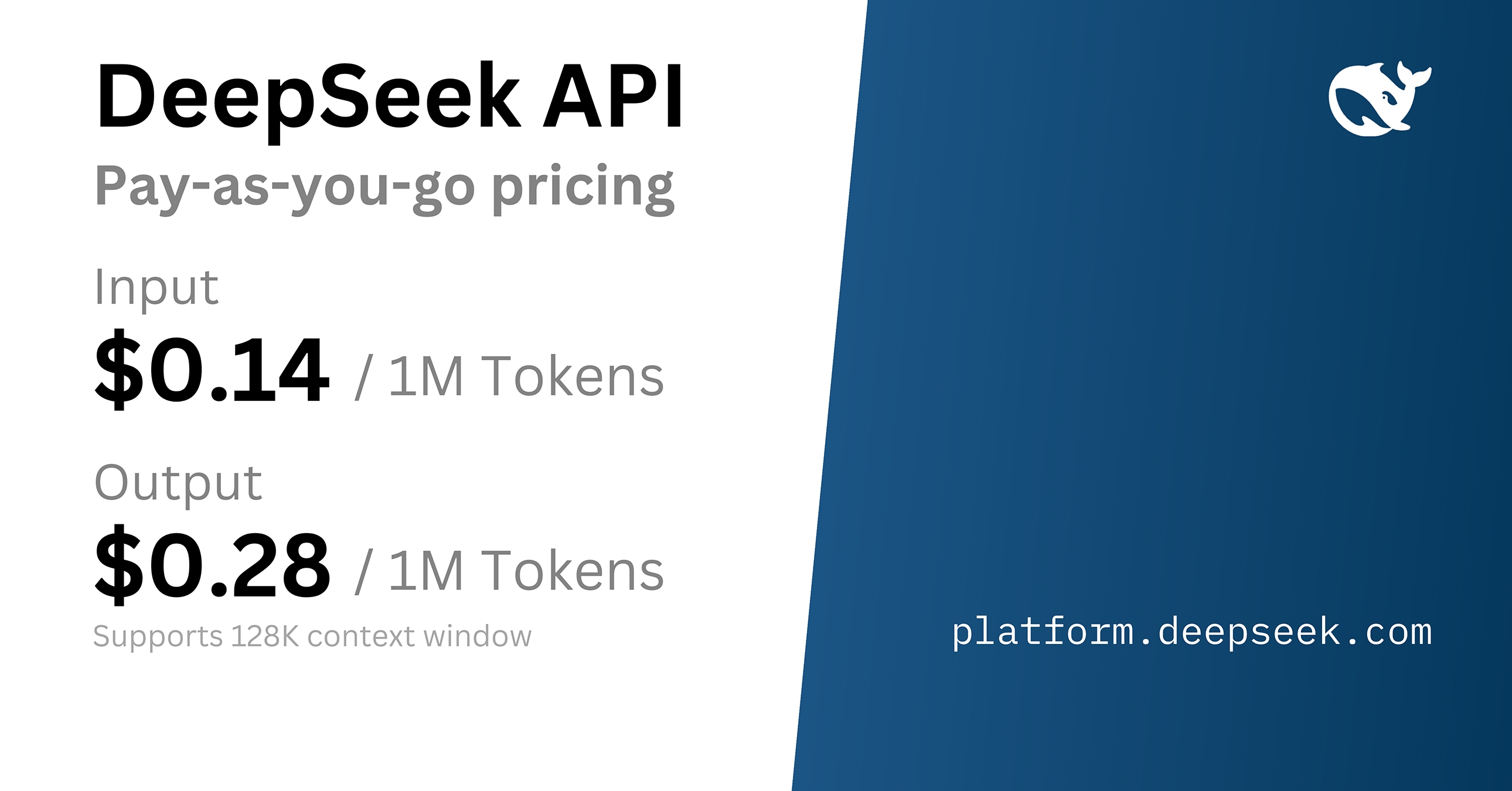

- Cost: DeepSeek V4 is roughly 90% cheaper to run than GPT-4o.

- Performance: It leads most benchmarks among open-weight models and rivals the top-tier closed models in world knowledge.

- Access: Because the weights are open, you can run it on your own infrastructure. That means far fewer worries about data privacy or "black box" API changes.
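
To see what "roughly 90% cheaper" means for a bill, here is a small arithmetic sketch. The $10-per-million-token baseline and the 500-million-token monthly volume in the usage note are hypothetical numbers chosen for illustration, not published prices; only the 90% figure comes from the list above.

```python
def discounted_price(baseline: float, percent_cheaper: float) -> float:
    """Price after a percentage discount, e.g. 90 means '90% cheaper'."""
    if not 0 <= percent_cheaper < 100:
        raise ValueError("percent_cheaper must be in [0, 100)")
    return baseline * (100 - percent_cheaper) / 100


def price_ratio(percent_cheaper: float) -> float:
    """How many times more the baseline costs than the discounted option."""
    if not 0 <= percent_cheaper < 100:
        raise ValueError("percent_cheaper must be in [0, 100)")
    return 100 / (100 - percent_cheaper)


def monthly_cost(million_tokens: float, price_per_million: float) -> float:
    """Monthly spend for a given token volume, in the same currency as the price."""
    return million_tokens * price_per_million
```

With the hypothetical $10 baseline, `discounted_price(10.0, 90)` gives $1 per million tokens, and `price_ratio(90)` gives 10: being 90% cheaper is the same claim as the incumbent costing ten times more. At 500 million tokens a month, that is the difference between $5,000 and $500.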

The Hardware Secret Sauce

There’s a lot of talk about how China is handling the chip bans. DeepSeek is being surprisingly transparent about it. They’ve built their software to work on both Nvidia and Huawei silicon. While they admit they’re currently limited by raw computing capacity, they're already eyeing Huawei’s Ascend 950PR systems to scale up later this year.

This multi-chip strategy is a hedge against geopolitics. It means DeepSeek doesn't depend on US-designed chips such as Nvidia's H100 to stay competitive. They’re squeezing every bit of performance out of the hardware they have, which is a lesson in engineering that most Western firms haven't had to learn yet because they could always just buy more chips.

How to Use This in Your Workflow

If you're still paying top dollar for every API call, you're losing money. DeepSeek V4 is a prime candidate for "routing," which means sending each request to the cheapest model that can handle it. You don't need a $100-per-million-token model to summarize an email or format a JSON file.

- Move your technical tasks first. DeepSeek is arguably the best coding assistant on the market right now. Point your dev tools at the V4 Pro API.

- Use Flash for agents. If you’re building a customer service bot or an autonomous research agent, the V4 Flash model offers the best balance of speed and reasoning.

- Self-host for privacy. If you handle sensitive data, pull the weights from Hugging Face and run them on your own servers. You get performance in the same league as the premium models without your data ever leaving your network.
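
The three rules above can be sketched as a tiny router. This is an illustration under stated assumptions rather than a production design: the model identifiers, the `Task` type, and the task categories are all hypothetical, so check your provider's documentation for the real model names. Sending summaries and formatting jobs to Flash is also an assumption, based on the point that simple tasks don't need an expensive model.

```python
from dataclasses import dataclass

# Hypothetical identifiers, used only for illustration.
PRO = "deepseek-v4-pro"
FLASH = "deepseek-v4-flash"
SELF_HOSTED = "self-hosted-deepseek-v4"
FALLBACK = "current-premium-model"  # whatever you already pay for


@dataclass(frozen=True)
class Task:
    kind: str                # e.g. "code", "agent_step", "summarize", "format"
    sensitive: bool = False  # True if the data must stay on your own servers


def route(task: Task) -> str:
    """Pick a model for a task, following the three rules in the list above."""
    if task.sensitive:
        # Privacy comes first: sensitive data never goes to a hosted API.
        return SELF_HOSTED
    if task.kind == "code":
        return PRO
    if task.kind in {"agent_step", "summarize", "format"}:
        return FLASH
    # Unknown task types stay on the existing model until evaluated.
    return FALLBACK
```

For example, `route(Task("code"))` selects the Pro identifier, while `route(Task("summarize", sensitive=True))` selects the self-hosted deployment regardless of task type. Keeping the fallback in place lets you migrate one task category at a time and compare quality before committing.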

The era of expensive, gated AI is ending. DeepSeek proved that intelligence can be commoditized. The question isn't whether their models are "as good" as the ones from San Francisco. The question is why anyone would keep paying ten times more for a difference that's becoming harder to see every day. Start migrating your heavy-duty workloads to the V4 API now. Your budget will thank you.