Microsoft has quietly driven a wedge between its marketing promises and its legal obligations. While the company positions Copilot as a revolutionary productivity tool capable of rewriting the corporate world, its service terms tell a different story. Deep within the fine print, the tech giant classifies its flagship AI as "entertainment only." This isn't a mere quirk of legal drafting. It is a calculated move to shift the risk of AI-generated errors, hallucinations, and professional negligence entirely onto the user. By framing a tool meant for coding, data analysis, and executive communication as a toy, Microsoft is attempting to enjoy the profits of the AI boom without accepting the responsibility that comes with being a primary software provider.

The core of the issue lies in the disconnect between the shiny advertisements showing Copilot managing complex enterprise workflows and the "as-is" reality of the Service Agreement. If a professional uses the tool to calculate financial projections and the software introduces a decimal-point error that costs a firm millions, Microsoft’s legal defense is already written. They told you it was for fun. This sets a dangerous precedent for the software industry: a product can be sold on its utility while its maker disclaims its reliability.

The Strategy of Managed Expectation

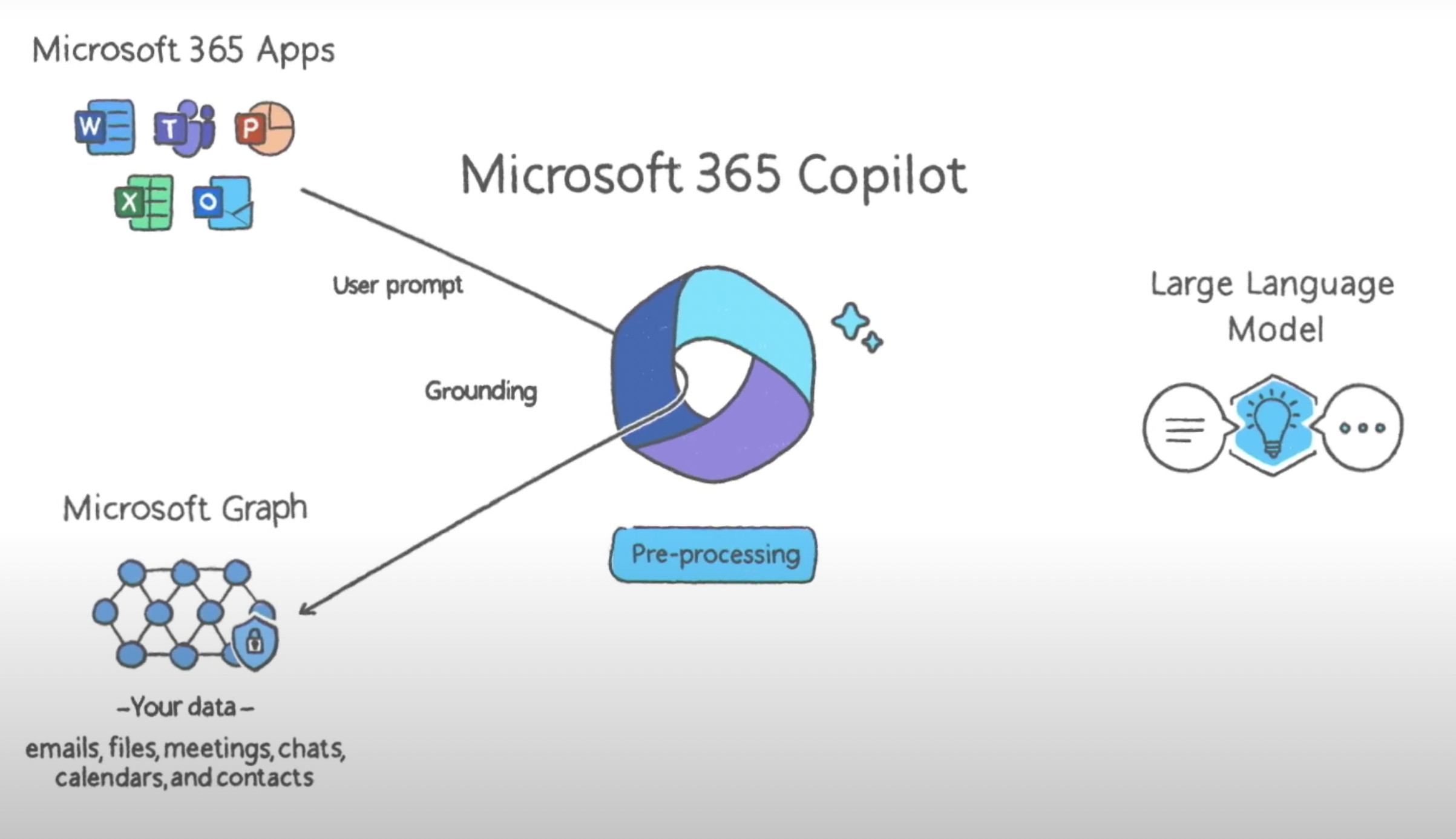

Software companies have used End User License Agreements (EULAs) to dodge liability for decades. However, the generative AI era has forced a more aggressive approach to these disclaimers. Unlike a traditional spreadsheet program, which follows rigid, predictable logic, large language models are probabilistic. They predict the next word in a sequence. Because Microsoft cannot guarantee the accuracy of a prediction, it has opted to categorize the entire output as non-professional.

This "entertainment" tag serves as a universal solvent for accountability. If the AI provides medical advice that is dangerously wrong, or legal citations that do not exist, the company can point to the terms and claim the user was warned. It is a staggering admission of instability for a product that costs enterprise customers $30 per user every month. You do not pay enterprise prices for a novelty act. You pay for a tool that works.

Why the Entertainment Label Matters

In a courtroom, words have specific gravity. "Productivity tool" implies a standard of care and fitness for a particular purpose. "Entertainment" implies that the primary value is the experience, not the result. By choosing the latter, Microsoft effectively tells its users that any work produced by Copilot should be treated with the same skepticism as a script for a science fiction movie.

Consider the hypothetical example of a mid-sized law firm using Copilot to summarize depositions. If the AI misses a crucial admission of guilt or hallucinates a witness statement, the firm faces a malpractice claim. Microsoft, meanwhile, remains insulated. It has successfully offloaded the cost of quality control to the customer. This is a brilliant business move, but it is a disastrous foundation for the future of professional software.

The Ghost in the Machine

The technical reality of these models makes them inherently "un-software-like." Traditional software is deterministic. If you press 'A', you get 'A'. Generative AI is stochastic. If you ask for 'A', you might get 'A', a blurry version of 'B', or a confident lie about 'C'. Microsoft knows this. Their engineers know this. Their lawyers definitely know this.
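The contrast can be made concrete with a toy sketch. The word list and probabilities below are invented for illustration and do not come from any real model; the only point is that a deterministic function returns the same answer every time, while sampling from a probability distribution does not.

```python
import random

# Hypothetical next-word distribution; the words and weights are made up.
NEXT_WORD_PROBS = {"A": 0.90, "B": 0.07, "C": 0.03}


def deterministic_key(key: str) -> str:
    """Traditional software: the same input always yields the same output."""
    return key


def sample_next_word(rng: random.Random) -> str:
    """Toy generative model: draw one word according to its probability."""
    words = list(NEXT_WORD_PROBS)
    weights = list(NEXT_WORD_PROBS.values())
    return rng.choices(words, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random()
    # Always five copies of "A".
    print([deterministic_key("A") for _ in range(5)])
    # Usually "A", occasionally "B" or "C"; varies from run to run.
    print([sample_next_word(rng) for _ in range(5)])
```

Run the script a few times and the first line never changes, while the second occasionally does. Scaled up to billions of parameters and whole paragraphs of output, that variability is what a conventional warranty struggles to cover.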

The backlash from users isn't just about the words in the contract; it’s about the bait-and-switch. Marketing teams talk about "unleashing" human potential, while the legal teams are busy building a cage around the company’s liability. This creates a trust gap that could stall the adoption of AI in high-stakes industries. If a surgeon or a structural engineer cannot rely on the data provided by their interface, the interface is a liability, not an asset.

The Myth of the Copilot

The very name "Copilot" suggests a partnership. In aviation, a copilot is a qualified professional responsible for the safety of the flight. If a human copilot makes a mistake, they are held accountable. Microsoft’s version of a copilot wants the seat in the cockpit but refuses to touch the controls when the plane starts to dive. It is an assistant that claims no mastery.

This branding is intentional. It suggests that the "Pilot" (the human) is always in command and therefore always at fault. If you didn't check the AI's work, that's on you. If you did check it and missed an error, that's also on you. The machine is merely a high-speed suggestion engine that bears no burden of truth.

Regulatory Blind Spots and the Race to the Bottom

Government regulators are currently playing catch-up with the speed of AI deployment. While the European Union’s AI Act attempts to categorize systems by risk level, Microsoft’s "entertainment" clause is a clever way to sidestep these classifications. If a tool is labeled as entertainment, it might bypass the rigorous testing required for "high-risk" applications in sectors like HR, education, or essential services.

This creates a race to the bottom. If Microsoft can avoid liability by using specific language, Google, Meta, and OpenAI will follow suit. We are entering an era where the most powerful tools in human history come with the same legal weight as a crossword puzzle.

The Enterprise Dilemma

Chief Information Officers (CIOs) are in a difficult position. They are under immense pressure to integrate AI to keep up with competitors. Yet, they are signing contracts that explicitly state the software they are buying isn't reliable for professional tasks.

- Risk Transfer: Firms are essentially becoming beta testers for Microsoft, paying for the privilege of identifying the model's flaws.

- Data Integrity: If the output is "entertainment," the data generated cannot be trusted as a "record of truth" for audits or compliance.

- Insurance Gaps: Many professional liability insurance policies require the use of industry-standard, reliable tools. An "entertainment" bot may not qualify.

The Architecture of Deception

To understand why this is happening now, look at the compute costs. Running these models is staggeringly expensive. Microsoft has invested billions into its partnership with OpenAI and its own Azure infrastructure. To see a return on that investment, they need massive, rapid adoption. They cannot afford the slow, methodical rollout that a "professional grade" tool would require.

By lowering the legal bar to "entertainment," they can ship updates faster. They don't have to wait for the model to be 99.9% accurate if the terms say it doesn't have to be accurate at all. Speed has replaced stability as the primary metric of success in Redmond.

The Hallucination Tax

Every time a user has to double-check an AI’s work, they are paying a "hallucination tax." This is the time and mental energy spent verifying that the machine hasn't lied. If a tool is truly for entertainment, this tax is part of the fun. If it’s for work, it’s a hidden cost that erodes the promised productivity gains.

We are seeing reports of "AI fatigue" in the workplace. Employees are finding that cleaning up after the AI takes as long as doing the task themselves. This is the natural result of trying to force an entertainment-grade engine into a professional-grade chassis.
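A back-of-the-envelope sketch shows how the tax can cancel the gain. Every figure below is hypothetical, chosen only to illustrate the arithmetic; none of them is a measurement of Copilot or any other product, and the function name and parameters are assumptions made for this example.

```python
def net_minutes_saved(manual_minutes: float,
                      ai_draft_minutes: float,
                      review_minutes: float,
                      error_rate: float,
                      rework_minutes: float) -> float:
    """Expected minutes saved per task once verification is counted.

    error_rate is the fraction of AI outputs that still need rework
    after the human review step.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    ai_total = ai_draft_minutes + review_minutes + error_rate * rework_minutes
    return manual_minutes - ai_total


if __name__ == "__main__":
    # Hypothetical task that takes 30 minutes by hand.
    light = net_minutes_saved(30, 2, 10, 0.2, 30)  # light review, few errors
    heavy = net_minutes_saved(30, 2, 20, 0.4, 30)  # heavy review, many errors
    print(f"light review: {light:.1f} minutes saved per task")
    print(f"heavy review: {heavy:.1f} minutes saved per task")
```

Under the first set of assumptions the tool still saves about 12 minutes per task. Under the second, the review and rework cost more than doing the job by hand, and the "saving" is roughly 4 minutes lost.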

Breaking the Terms of Service

What happens when the first major lawsuit hits? Eventually, a company will suffer a catastrophic loss due to a Copilot error. It will sue Microsoft, claiming that the "entertainment" clause is unconscionable given how the product was marketed. Microsoft will point to the click-wrap agreement the IT manager accepted.

The courts will have to decide if a company can market a product as an essential business tool while legally defining it as a toy. Historically, courts have been protective of companies in software liability cases, but the scale and autonomy of AI change the math. This isn't just a bug in a line of code; it is a system that generates new, unpredictable content.

The Path to Accountability

If Microsoft wants Copilot to be the "UI for the world," they must eventually stand behind its output. This would require:

- Professional Certification: Tiered versions of AI that come with accuracy guarantees for specific industries.

- Indemnification: Expanding current copyright indemnity to cover certain types of factual or functional errors.

- Transparent Error Rates: Providing users with a "confidence score" for every output, backed by technical documentation.

None of these are currently on the table. Instead, we have a $3 trillion company telling its customers to have fun with their $30-a-month chatbot and not to blame the manufacturer when it breaks.

The Future of Professional Standards

The legal hedging by Microsoft is a signal that the technology is not nearly as mature as the marketing suggests. We are being sold the future, but the warranty only covers the present-day reality of a temperamental, unpredictable statistical model.

For the professional who relies on accuracy, the message is clear. You are on your own. The software on your screen is a ghost, a mirage of intelligence that vanishes the moment a lawyer enters the room. Until the industry moves past the "entertainment" shield, AI will remain a high-stakes gamble disguised as a desktop icon.

The burden of proof has been shifted. The risks have been externalized. The profits have been internalized. Microsoft has built an incredible machine, but they are clearly terrified of what happens when people actually trust it.